EMI-LiDAR: Uncovering Vulnerabilities of LiDAR Sensors in Autonomous Driving Setting using Electromagnetic Interference

EMI Vulnerability Overview

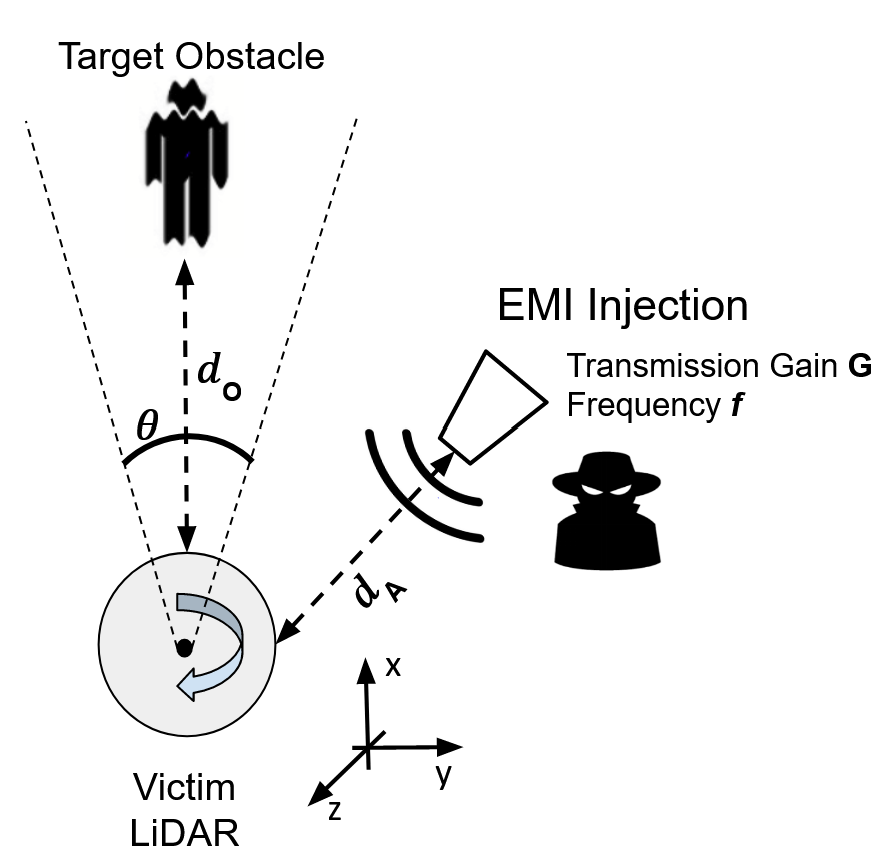

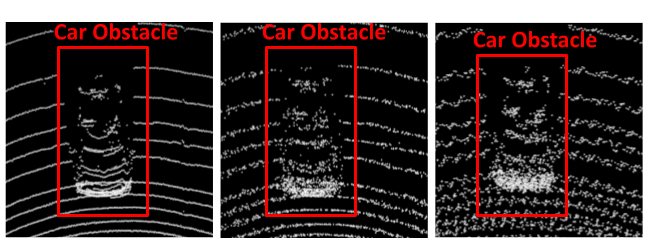

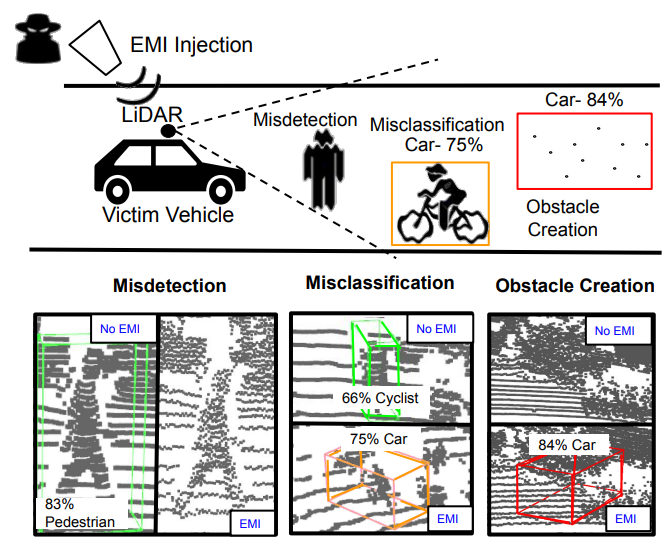

Autonomous vehicles (AVs) that rely on LiDAR-based object detection are improving rapidly and becoming an increasingly viable mode of transportation. Although these detection systems perceive the surrounding environment effectively, they have been shown to be vulnerable to laser attacks that can cause obstacles to be misclassified or removed. Such laser attacks, however, are challenging to perform because they require precise aiming. Our research exposes a new threat, Intentional Electromagnetic Interference (IEMI), which affects the time-of-flight (ToF) circuits that make up modern LiDARs.

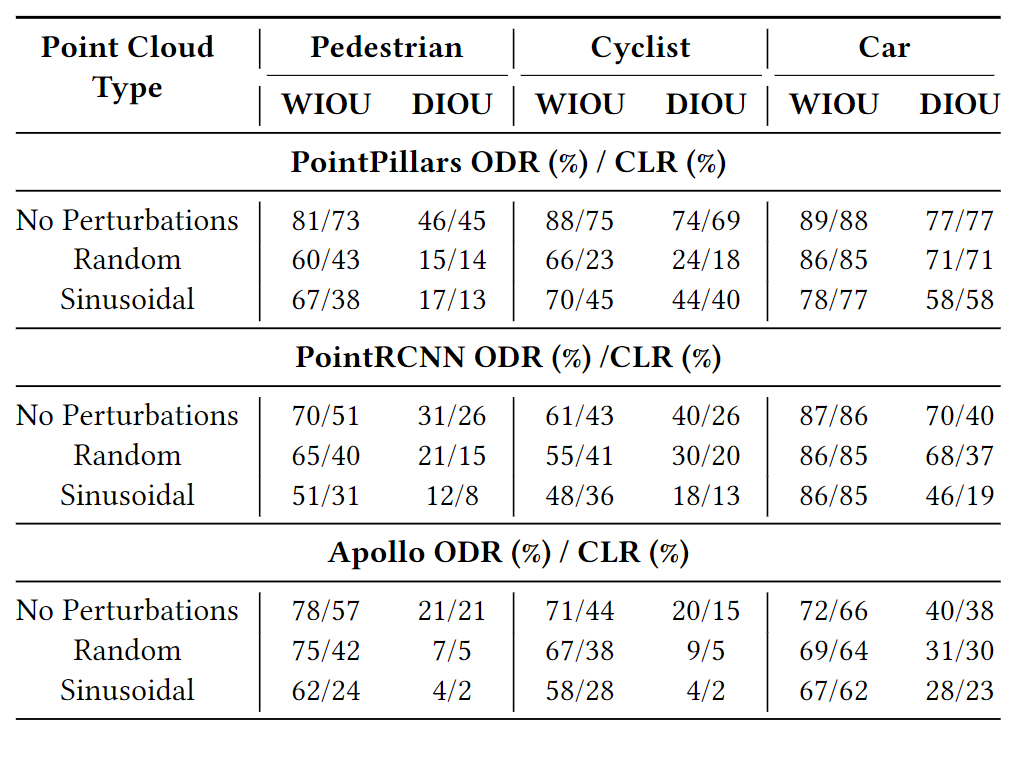

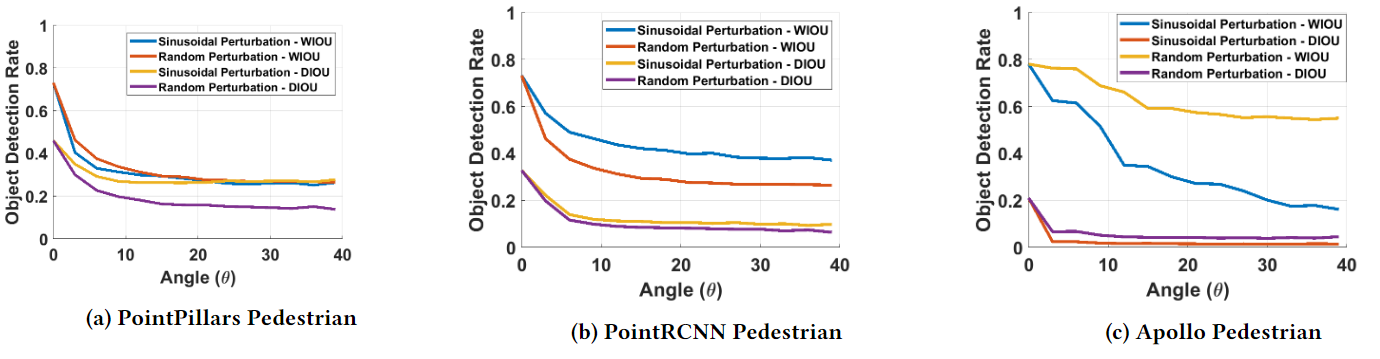

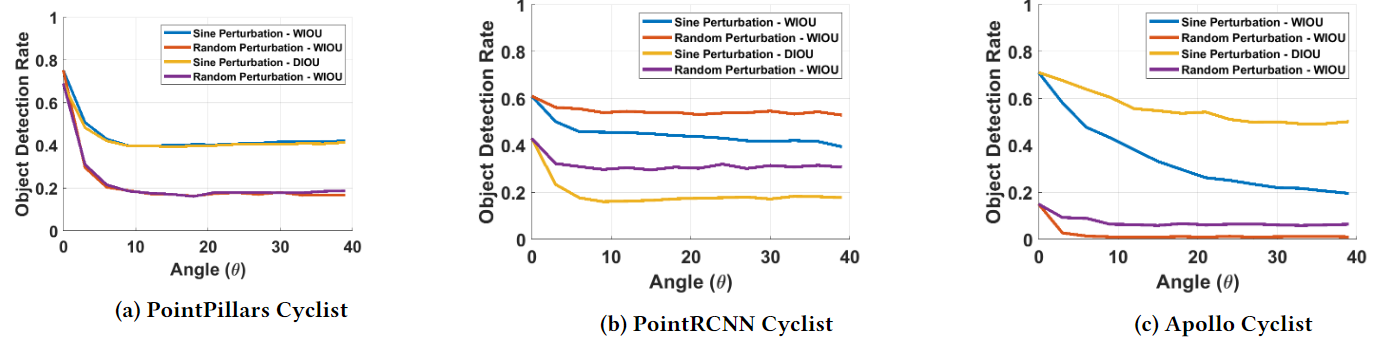

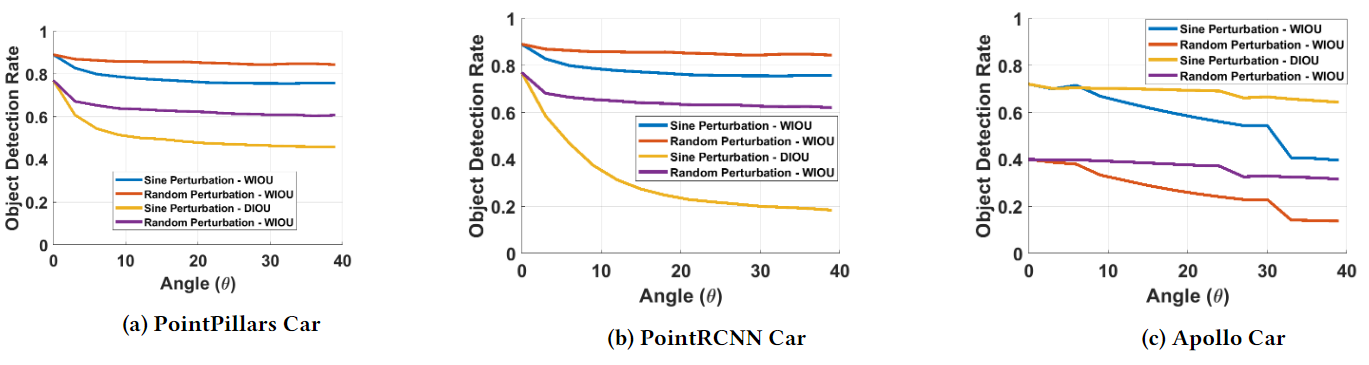

We show that these vulnerabilities can be exploited to force the AV perception system to misdetect or misclassify objects and to perceive non-existent obstacles. We evaluate the vulnerability in three AV perception modules (PointPillars, PointRCNN, and Apollo) and show that the classification rate drops below 50%. We also analyze the impact of IEMI injection on two fusion models (AVOD and Frustum-ConvNet) and in real-world scenarios. Finally, we discuss potential countermeasures and propose two strategies to detect signal injection.

This paper appeared in ACM WiSec 2023.

@inproceedings{Bhupathiraju23,

author = {Bhupathiraju, Sri Hrushikesh Varma and Sheldon, Jennifer and Bauer, Luke A. and Bindschaedler, Vincent and Sugawara, Takeshi and Rampazzi, Sara},

title = {EMI-LiDAR: Uncovering Vulnerabilities of LiDAR Sensors in Autonomous Driving Setting Using Electromagnetic Interference},

booktitle = {Proceedings of the 16th ACM Conference on Security and Privacy in Wireless and Mobile Networks},

pages = {329--340},

year = {2023}

}

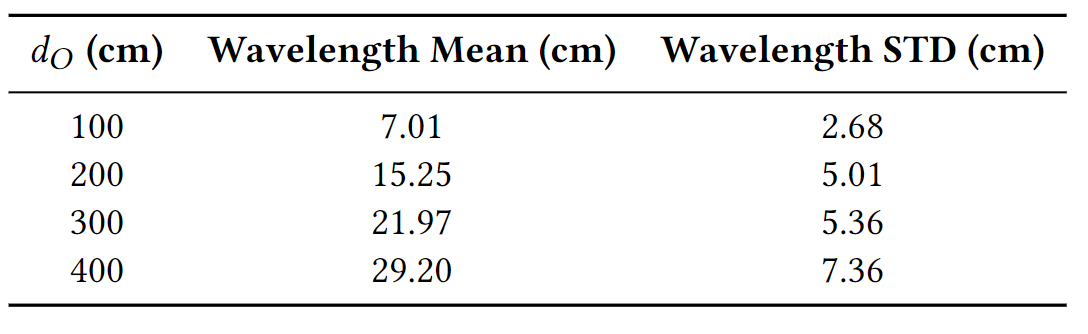

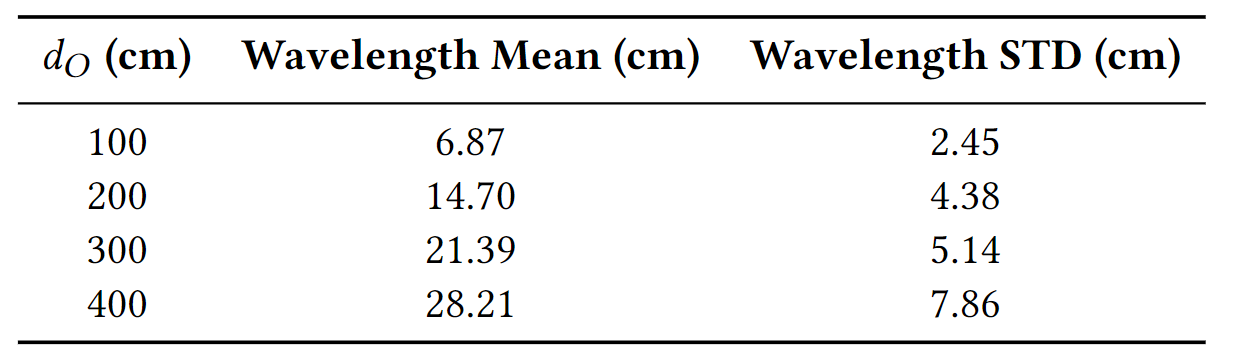

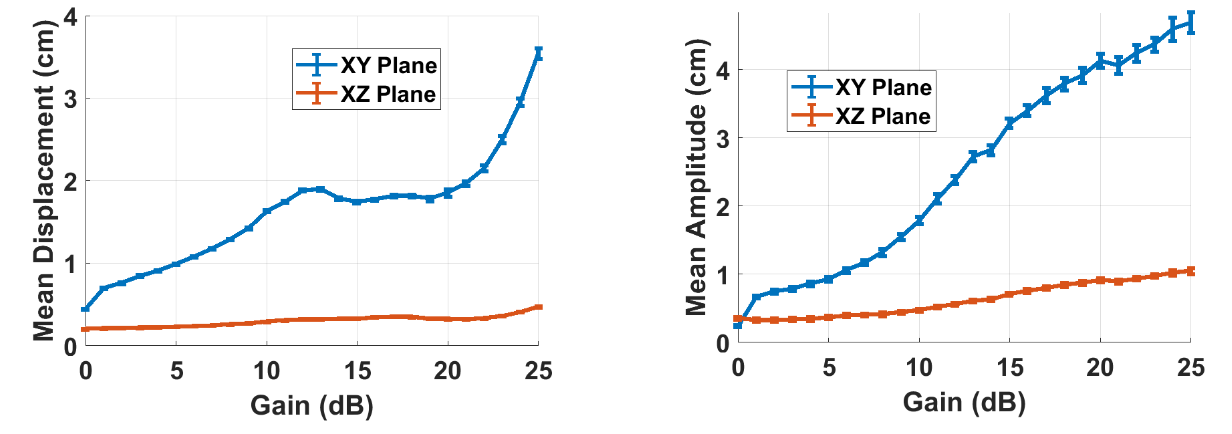

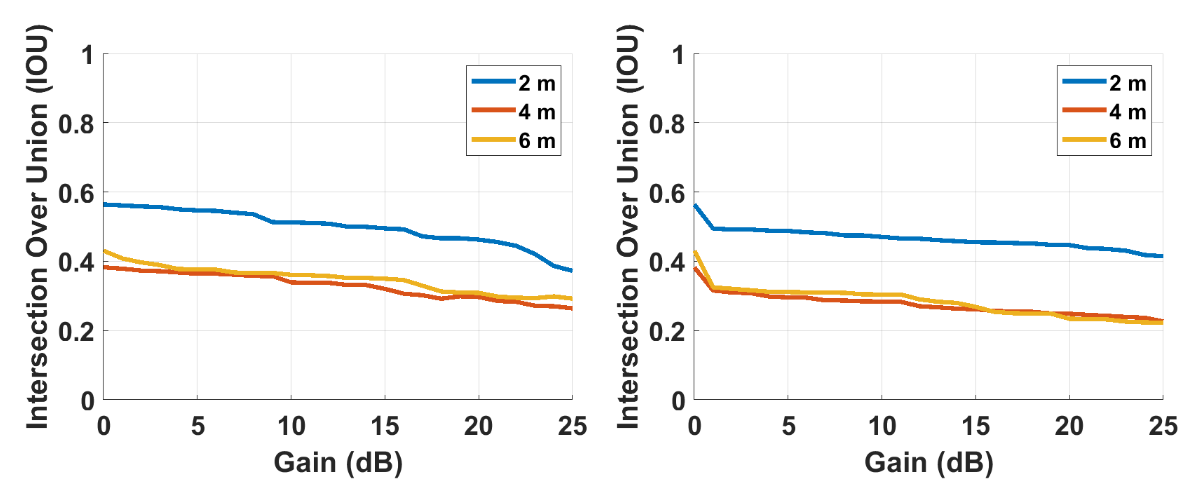

IEMI Vulnerability Characterization

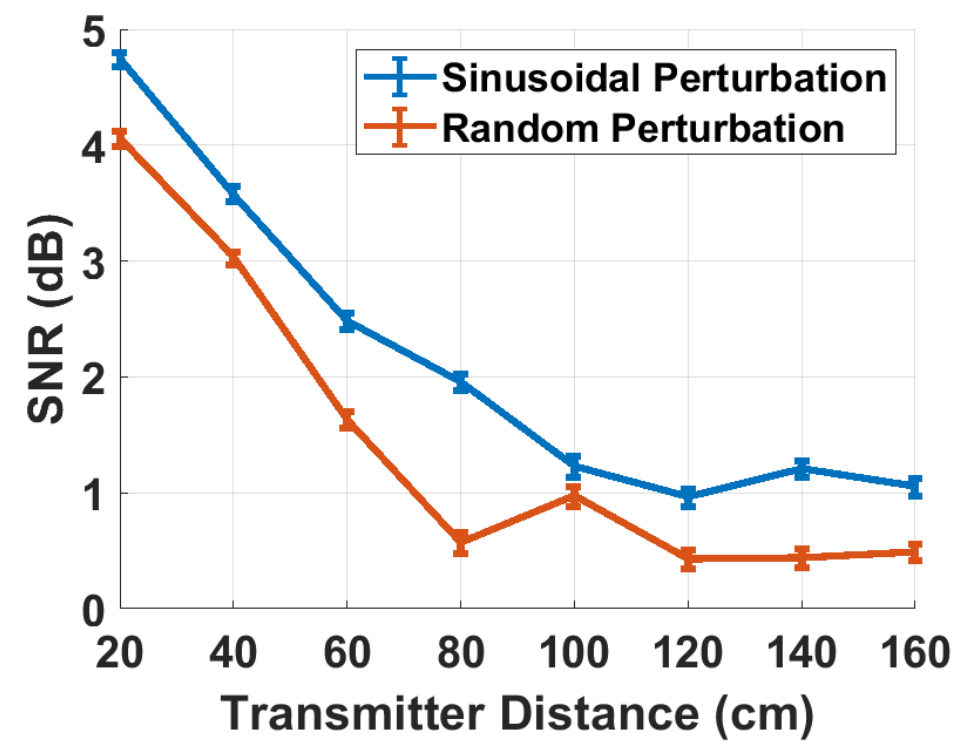

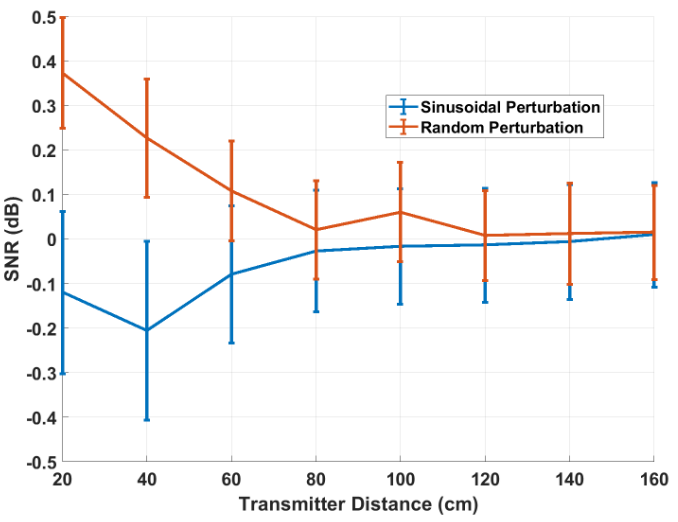

To determine whether a Velodyne VLP-16 LiDAR is vulnerable to IEMI, we performed a frequency sweep between 400 MHz and 1 GHz and found that different injection frequencies produced different perturbation patterns. We hypothesize that the vulnerability arises when EMI-induced voltages exceed the detection thresholds in the LiDAR's ToF circuits.
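The threshold hypothesis can be illustrated with a toy model. The sketch below is not the paper's method and does not model the VLP-16's actual circuitry. It assumes a simplified receiver that reports the first instant its input voltage crosses a fixed threshold, then converts that time to a range with d = c·t/2. All names (`simulate_range`, `first_crossing_time`) and all numeric values (threshold, pulse shape, EMI amplitudes) are hypothetical and chosen only for illustration.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def tof_to_range(t_seconds):
    """Convert a round-trip time of flight to a one-way distance in metres."""
    return C * t_seconds / 2.0


def first_crossing_time(samples, threshold, dt):
    """Return the time of the first sample at or above the threshold, or None."""
    for i, v in enumerate(samples):
        if v >= threshold:
            return i * dt
    return None


def simulate_range(echo_time, emi_amplitude, emi_freq_hz,
                   threshold=0.5, dt=1e-10, n=4000):
    """Toy ToF receiver: a rectangular echo pulse plus a sinusoidal EMI term.

    Assumed (hypothetical) model: the receiver voltage is the sum of a
    unit-height echo centred at `echo_time` and an injected sinusoid, and
    the detector fires on the first threshold crossing.
    """
    samples = []
    for i in range(n):
        t = i * dt
        echo = 1.0 if abs(t - echo_time) <= 2e-9 else 0.0
        emi = emi_amplitude * math.sin(2 * math.pi * emi_freq_hz * t)
        samples.append(echo + emi)
    t_hit = first_crossing_time(samples, threshold, dt)
    return None if t_hit is None else tof_to_range(t_hit)


if __name__ == "__main__":
    echo_time = 200e-9  # a target roughly 30 m away
    print("no EMI:", simulate_range(echo_time, 0.0, 700e6))
    # Sweep the band mentioned in the text in 100 MHz steps (step size assumed).
    for f_mhz in range(400, 1001, 100):
        weak = simulate_range(echo_time, 0.3, f_mhz * 1e6)
        strong = simulate_range(echo_time, 0.6, f_mhz * 1e6)
        print(f_mhz, "MHz  weak:", weak, " strong:", strong)
```

In this model, interference whose amplitude stays below the threshold leaves the reported range unchanged, while interference that exceeds it triggers the detector before the true echo arrives and yields a spurious, much shorter range. A real receiver involves amplification, filtering, and timing logic that this sketch ignores, so it should be read only as an intuition aid for the hypothesis.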